Index of rationalist groups in the Bay July 2024 — LessWrong

Published on July 26, 2024 4:32 PM GMT. The Bay Area rationalist community has an entry problem!...

End Single Family Zoning by Overturning Euclid V Ambler — LessWrong

Published on July 26, 2024 2:08 PM GMT. On 75 percent or more of the residential land...

Common Uses of "Acceptance" — LessWrong

Published on July 26, 2024 11:18 AM GMT. “You should practise acceptance.” “What do you mean?” “You’re being too...

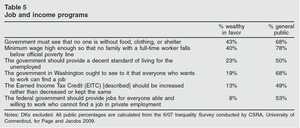

Universal Basic Income and Poverty — LessWrong

Published on July 26, 2024 7:23 AM GMT. (Crossposted from Twitter) I'm skeptical that Universal Basic Income can...

A Solomonoff Inductor Walks Into a Bar: Schelling Points for Communication — LessWrong

Published on July 26, 2024 12:33 AM GMT. A Solomonoff inductor walks into a bar in a...

What does a Gambler's Verity world look like? — LessWrong

Published on July 25, 2024 10:03 PM GMT. Status: Thought experiment for fun. Imagine a world in which...

Pacing Outside the Box: RNNs Learn to Plan in Sokoban — LessWrong

Published on July 25, 2024 10:00 PM GMT. Work done at FAR AI. There has been a lot...

Does robustness improve with scale? — LessWrong

Published on July 25, 2024 8:55 PM GMT. Adversarial vulnerabilities have long been an issue in various...

Organisation for Program Equilibrium reading group — LessWrong

Published on July 25, 2024 7:11 PM GMT. 2024-07-25: I'm organising a little reading group, undecided between...

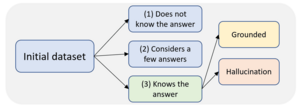

Constructing Benchmarks and Interventions for Combating Hallucinations in LLMs — LessWrong

Published on July 25, 2024 2:58 PM GMT. This is based on our recent preprint paper “Constructing...

"AI achieves silver-medal standard solving International Mathematical Olympiad problems" — LessWrong

Published on July 25, 2024 3:58 PM GMT. Google DeepMind reports on a system for solving mathematical...

AlphaProof: an LLM to auto-formalize + AlphaZero self-trained to prove mathematical statements in Lean — LessWrong

Published on July 25, 2024 3:54 PM GMT. There doesn't seem to be an arXiv PDF out...

[Talk transcript] What “structure” is and why it matters — LessWrong

Published on July 25, 2024 3:49 PM GMT. This is an edited transcription of the final presentation...

AI #74: GPT-4o Mini Me and Llama 3 — LessWrong

Published on July 25, 2024 1:50 PM GMT. We got two big model releases this week. GPT-4o...

AI Constitutions are a tool to reduce societal scale risk — LessWrong

Published on July 25, 2024 11:18 AM GMT. Sammy Martin, Polaris Ventures. As AI systems become more integrated...

Determining the power of investors over Frontier AI Labs is strategically important to reduce x-risk — LessWrong

Published on July 25, 2024 1:12 AM GMT. Produced as part of the ML Alignment & Theory...

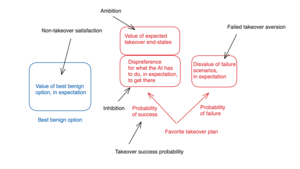

A framework for thinking about AI power-seeking — LessWrong

Published on July 24, 2024 10:41 PM GMT. This post lays out a framework I’m currently using...

Llama Llama-3-405B? — LessWrong

Published on July 24, 2024 7:40 PM GMT. It’s here. The horse has left the barn. Llama-3.1-405B,...

AI Safety Memes Wiki — LessWrong

Published on July 24, 2024 6:53 PM GMT. Extensive collection of memes compiled by Victor Li and...

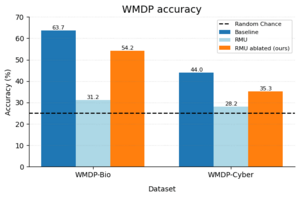

Unlearning via RMU is mostly shallow — LessWrong

Published on July 23, 2024 4:07 PM GMT. This is an informal research note. It is the...

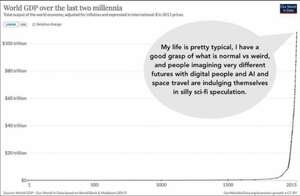

Monthly Roundup #20: July 2024 — LessWrong

Published on July 23, 2024 12:50 PM GMT. It is monthly roundup time. I invite readers who...

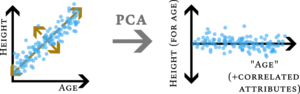

Confusing the metric for the meaning: Perhaps correlated attributes are "natural" — LessWrong

Published on July 23, 2024 12:43 PM GMT. Epistemic status: possibly trivial, but I hadn't heard it...

Ransomware Payments Should Require a Sin Tax — LessWrong

Published on July 22, 2024 9:16 PM GMT. Can a sin tax solve the ransomware problem? The...

My covid-related beliefs and questions — LessWrong

Published on July 23, 2024 3:27 AM GMT. Things I'm fairly confident in: I should take colds...

Is there a Schelling point for group house room listings? — LessWrong

Published on July 23, 2024 3:03 AM GMT. My rationalist group house near Boston has a room...

Room Available in Boston Group House — LessWrong

Published on July 23, 2024 2:55 AM GMT. We have a room opening up in a rationalist...

D&D.Sci Scenario Index — LessWrong

Published on July 23, 2024 2:00 AM GMT. There have been a lot of D&D.Sci scenarios, but...

ML Safety Research Advice - GabeM — LessWrong

Published on July 23, 2024 1:45 AM GMT. This is my advice for careers in empirical ML...

Trying to understand Hanson's Cultural Drift argument — LessWrong

Published on July 22, 2024 8:20 PM GMT. At 2024's Manifest, Robin Hanson gave a talk (in...

Using an LLM perplexity filter to detect weight exfiltration — LessWrong

Published on July 21, 2024 6:18 PM GMT. Recently, there has been discussion on how to make...

Would a scope-insensitive AGI be less likely to incapacitate humanity? — LessWrong

Published on July 21, 2024 2:15 PM GMT. I was listening to Anders Sandberg talk about "humble...

Holomorphic surjection theorem (Picard's little theorem) — LessWrong

Published on July 21, 2024 1:24 PM GMT. Consider an entire function (complex-differentiable everywhere) $f(z)$...

aimless ace analyzes active amateur: a micro-aaaaalignment proposal — LessWrong

Published on July 21, 2024 12:37 PM GMT. This idea is so simple that I'm sure it's...

Pivotal Acts are easier than Alignment? — LessWrong

Published on July 21, 2024 12:15 PM GMT. The prevailing notion in AI safety circles is that...

Introduction to Modern Dating: Strategic Dating Advice for beginners — LessWrong

Published on July 20, 2024 3:45 PM GMT. Heads up: This is not really a post about...

Ball Sq Pathways — LessWrong

Published on July 21, 2024 2:20 AM GMT. With the Red Line shut down north of...

Freedom and Privacy of Thought Architectures — LessWrong

Published on July 20, 2024 9:43 PM GMT. I don't work in cyber security, so others will...

Why Georgism Lost Its Popularity — LessWrong

Published on July 20, 2024 3:08 PM GMT. Henry George’s 1879 book Progress & Poverty was the...

A more systematic case for inner misalignment — LessWrong

Published on July 20, 2024 5:03 AM GMT. This post builds on my previous post making the...

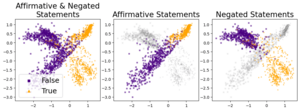

Truth is Universal: Robust Detection of Lies in LLMs — LessWrong

Published on July 19, 2024 2:07 PM GMT. A short summary of the paper is presented below. TL;DR:...

Sustainability of Digital Life Form Societies — LessWrong

Published on July 19, 2024 1:59 PM GMT. Hiroshi Yamakawa (1, 2, 3, 4). (1) The University of Tokyo, Tokyo, Japan; (2) AI Alignment Network,...

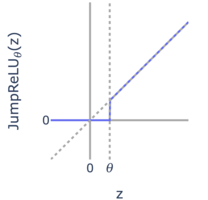

JumpReLU SAEs + Early Access to Gemma 2 SAEs — LessWrong

Published on July 19, 2024 4:10 PM GMT. New paper from the Google DeepMind mechanistic interpretability team,...

Romae Industriae — LessWrong

Published on July 19, 2024 1:03 PM GMT. Whatever each culture grows and manufactures cannot fail to...

Have people given up on iterated distillation and amplification? — LessWrong

Published on July 19, 2024 12:23 PM GMT. The BlueDot Impact write-up for scalable oversight seems to...

How do we know that "good research" is good? (aka "direct evaluation" vs "eigen-evaluation") — LessWrong

Published on July 19, 2024 12:31 AM GMT. AI Alignment is my motivating context but this could...

Linkpost: Surely you can be serious — LessWrong

Published on July 18, 2024 10:18 PM GMT. Adam Mastroianni writes about "actually caring about stuff, and...

My experience applying to MATS 6.0 — LessWrong

Published on July 18, 2024 7:02 PM GMT. The current cohort of the ML Alignment & Theory...

What are the actual arguments in favor of computationalism as a theory of identity? — LessWrong

Published on July 18, 2024 6:44 PM GMT. A few months ago, Rob Bensinger made a rather...

Yet Another Critique of "Luxury Beliefs" — LessWrong

Published on July 18, 2024 6:37 PM GMT. I know little about Rob Henderson except that he...

Individually incentivized safe Pareto improvements in open-source bargaining — LessWrong

Published on July 17, 2024 6:26 PM GMT. Summary: Agents might fail to peacefully trade in high-stakes negotiations....