In a new Metrics, and also in Appmetrica instead of the "fixed" reports, each report can be changed arbitrarily. It is possible to add new measurements (for example, to add to the report on search phrases still splitting according to pages of an input into the website), to segment and compare (it is possible to compare traffic sources on the website for all visitors and visitors from Moscow), to change a set of metrics and so on. Of course, it demands absolutely different approaches to data storage.

MyISAM

Right at the beginning the Metrics was created as Direkt's part. In Direkta for a solution of a problem of storage of statistics table MyISAM were used, and we began with it too. We used MyISAM for storage of the "fixed" reports from 2008 to 2011. Give, I will tell what has to be structure of the table for the report, for example, on geography. The report is shown for the specific website (more precisely, numbers of the counter of the Metrics). Means, the primary key has to include number of the counter — CounterID. The user can select any reporting period. It would be unreasonable to save data for each couple of dates therefore they remain for each date and then at request are summed up for the set interval. That is the primary key includes date — Date. In the report data are displayed for regions in the form of a tree from the countries, areas, the cities, or in the form of the list. It is reasonable to place the identifier of the region (RegionID) in primary key of the table, and to collect data in a tree already on the party of an applied code, but not the database. Still the average duration of visit is considered, for example. Means, in columns of the table there has to be a number of visits and total duration of visits. As a result, structure of the table such: CounterID, Date, RegionID-> Visits, SumVisitTime, … Let's consider what occurs when we want to receive the report. The request is made SELECT with a condition WHERE CounterID = c AND Date BETWEEN min_date AND max_date. That is there is a reading on the range of primary key.

How data on a disk are really stored?

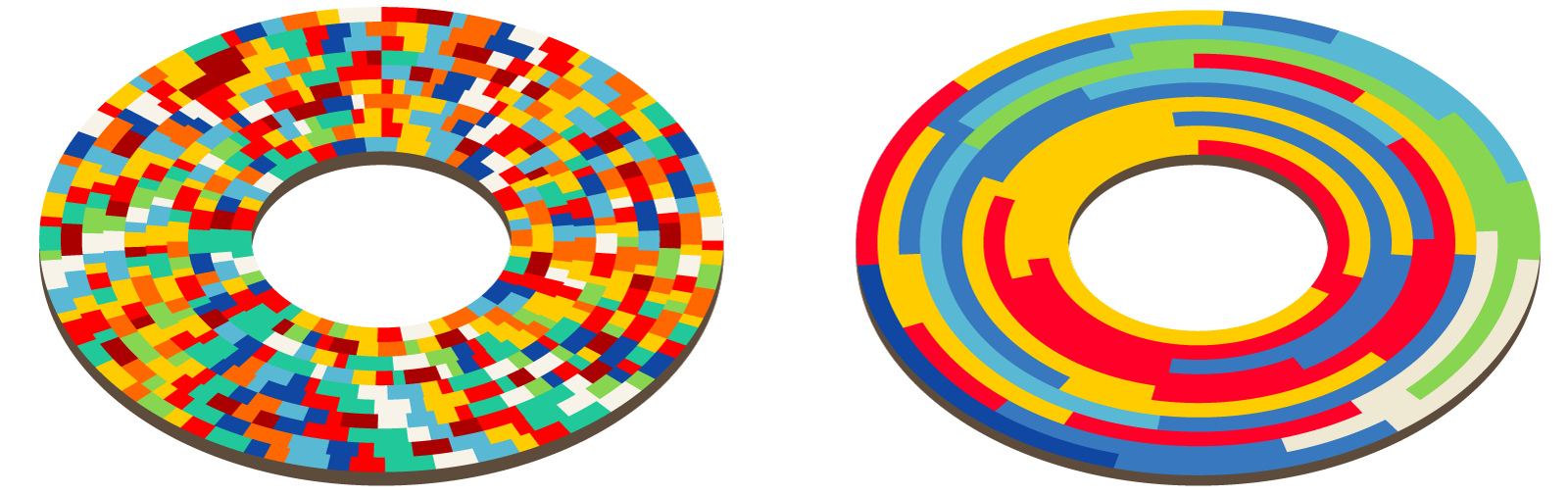

MyISAM the table represents the file with data and the file with indexes. If from the table nothing was removed and lines did not change the length when updating, then the file with data will represent the serialized lines laid in a row as their adding. The index (including, primary key) represents a B-tree in which leaves there are shifts in the file with data. When we read data on index range, from an index the set of shifts in the file with data gets. Then on this set of shifts readings are made of the file with data. Let's assume a natural situation when the index is in a RAM (key cache in MySQL or system page cache), and data are not cached in it. Let's assume that we use hard drives. Time for data reading depends on what data volume needs to be read and how many it is necessary to make seek-. The quantity of seek-is defined by locality of an arrangement of data on a disk. Events come to the Metrics as it should be, almost appropriate time of events. In this entering flow data of different counters are scattered absolutely arbitrarily. That is, the entering data of a lokalna on time, but not lokalna according to number of the counter. At record in MyISAM the table data of different counters will be also located absolutely in a random way, and it means that for data reading of the report it will be necessary to execute approximately so many accidental readings how many is lines necessary to us in the table. The normal hard drive 7200 RPM is able to execute from 100 to 200 accidental readings per second, the RAID at competent use — is proportional more. One SSD of five-year prescription is able to execute 30 000 accidental readings per second, but we are not able to afford to store our data on SSD. Thus, if for our report it is necessary to read 10 000 lines, then it will hardly take less than 10 seconds that is completely unacceptable.InnoDB as in InnoDB the clustering primary key is used is suitable for readings on the range of primary key better (that is, data are stored it is arranged on primary key). But InnoDB could not be used because of low writing rate. If reading this text, you remembered about TokuDB, then continue to read this text.

In order that MyISAM worked quicker at the choice on the range of primary key, some tricks were applied.Sorting of the table. As data need to be updated incrementally, to once sort the table insufficiently, and it is impossible to sort its every time. Nevertheless, it can be done periodically for old data.

Partitsionirovaniye. The table breaks into a quantity of smaller on ranges of primary key. At the same time there is a hope that data of one partition will be stored more or less locally, and requests on the range of primary key will quicker work. This method it is possible to call "clustering primary key manually". At the same time the insert of data is a little slowed down. Selecting quantity of partition, as a rule, it is possible to reach a compromise between the speed of inserts and readings.

Separation of data into generations. At one scheme of a partitsionirovaniye can slow down too readings, at another — inserts, and at intermediate — both that, and another. In this case separation of data into several generations is possible. For example, we will call first generation operational data — there the partitsionirovaniye is made as an insert (on time) or not made in general. We will call the second generation contemporary records — there it is made as reading (according to number of the counter). Data are transferred from generation to generation by a script, but is not too frequent (for example, once a day). Data are read out from all generations at once. It, as a rule, helps, but adds very many difficulties.

All these tricks (and some other) were used in Yandex. To a metrics once long ago in order that everything somehow worked. We summarize what shortcomings are available:- locality of data on a disk is very difficult maintained;

- tables are blocked at data record;

- replication works slowly, remarks often lag behind;

- consistency of data after failure is not provided;

- such units as the number of unique users, are very difficult calculated and stored;

- it is difficult to use data compression; it works inefficiently;

- indexes take a lot of place and often are not located in a RAM;

- data need to be shardirovat manually;

- many calculations should be done on the party of an applied code after SELECT - and;

- difficult operation.

Locality of data on a disk, figurative representation

In general use of MyISAM was extremely inconvenient. In the afternoon servers worked with 100% load of disk arrays (permanent movement of heads). In such conditions disks fail more often than it is normal. On servers we used disk regiments (16 disks) — that is, quite often it was necessary to recover RAIDs. At the same time replication of an otstavl is even more and sometimes the remark had to be poured again. Switching of the master is extremely inconvenient. For the choice of a remark for which requests are sent we used MySQL Proxy, and this use was very unsuccessful (then we replaced it with HAProxy). Despite these shortcomings, as of 2011 we stored in MyISAM tables more than 580 billion lines. Then everything was converted in Metrage, deleted and as a result released many servers.Metrage

We use Metrage for storage of the fixed reports since 2010 till present. Let's assume, you have the following scenario of work:- data constantly register in base small batch-a;

- flow on record rather big — several hundred thousands of lines per second;

- it is a little requests for reading — tens-hundreds requests per second;

- all readings — on the range of primary key, to millions of lines on one request;

- lines rather short — about 100 bytes in an uncompressed type.

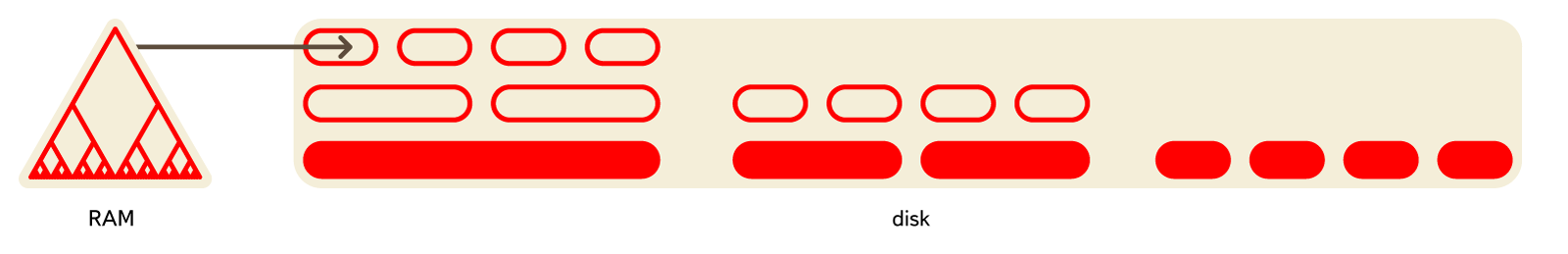

Rather widespread data structure of LSM-Tree well is suitable for it. It represents rather small set of "pieces" of data on a disk, each of which contains the data sorted by primary key. New data at first are had in any data structure in a RAM (MemTable), then register in a disk in the new sorted piece. Periodically in a background of several sorted pieces integrate in one larger sorted (compaction). Thus rather small set of pieces is constantly supported.

Among the built-in data structures of LSM-Tree implement LevelDB, RocksDB. It is used in HBase and Cassandra.

Metrage also represents LSM-Tree. As "lines" in it any data structures can be used (are fixed at a compilation stage). Each line is a couple a key, value. The key is a structure with operations of comparison on equality and an inequality. Value — any structure with the operations update (to add something) and merge (to aggregate, integrate with other value). To put it briefly, it is CRDT.

Can act as values as the simple structures (a train of numbers) and more difficult (a hash table for calculation of number of unique visitors, structure for the card of clicks). By means of the operations update and merge incremental aggregation of data is constantly executed:- during an insert of data, when forming a new pack in a RAM;

- during background merges;

- at requests for reading.

- Data are located rather locally on the hard drive, readings on the range of primary key work quickly.

- Data contract on blocks. Due to storage in the arranged type, compression rather strong when using fast shrinking algorithms (in 2010 used QuickLZ, since 2011 we use LZ4).

- Data storage in the arranged type allows to use the rarefied index. The rarefied index is an array of values of primary key for each N-oh line (N about thousands). Such index turns out the most compact and is always located in a RAM.

OLAPServer

In Yandex. To a metrics the data volume and value of loading are rather big that the main problem was to make a solution which at least works — solves a problem and at the same time copes with loading within adequate quantity of computing resources. Therefore often the main efforts are wasted on creating the minimum working prototype. OLAPServer was one of such prototypes. We used OLAPServer from 2009 to 2013 as a data structure for the designer of reports. Our task — to receive any reports which structure becomes known only while the user wants to receive the report. For this purpose it is impossible to use preaggregated data because it is impossible to provide all combinations of measurements, metrics, conditions in advance. So, it is necessary to store non-aggregated data. For example, for the reports calculated on the basis of visits it is necessary to have the table where to each visit there will correspond the line, and to each parameter in which it is possible to calculate the report — a column. We have such scenario of work:- there is wide "fact table" containing a large number of columns (hundreds);

- when reading rather large number of lines from a DB, but only a small subset of columns is taken out;

- requests for reading go rather seldom (normally no more than one hundred per second on the server);

- at execution of simple requests delays around 50 ms are admissible;

- values in columns rather small — numbers and small lines (an example — 60 bytes on URL);

- high flow capacity when processing one request is required (to billions of lines per second on one server);

- the result of execution of request is significantly less than basic data — that is, data are filtered or aggregated;

- rather simple scenario of data-refresh, batch-ama append-only is normal; there are no difficult transactions.

For such scenario of work (we will call him OLAP the scenario of work), column DBMS (column-oriented DBMS) in the best way approach. So DBMS in which data for each column are stored separately, and data of one column — together are called. Column DBMS effectively work for OLAP of the scenario of work on the following reasons: 1. On I/O.

- For execution of analytical request it is required to read a small amount of columns of the table. In a column DB for this purpose it is possible to read only the necessary data. For example, if you need only 5 columns from 100, then it is necessary to expect 20-fold reduction of input-output.

- As data are read by packs, it is simpler to squeeze them. The data lying on columns contract also best of all. At the expense of it the amount of input-output in addition decreases.

- Due to reduction of input-output, more data get into a system cache.

As for execution of request it is necessary to process rather large number of lines, becomes actual to dispetcherizovyvat all operations not for separate lines, and for the whole vectors (an example — the vector engine in VectorWise DBMS) or to implement the engine of execution of request so that costs for scheduling were approximately zero (an example — a kodogeneration by means of LLVM in Cloudera Impala). If not to do it, then at any not too bad disk subsystem the interpreter of request will inevitably rest against CPU. It makes sense not only to store data on columns, but also to process them whenever possible too on columns.

There are many column DBMS. It is, for example, Vertica, Paraccel (Actian Matrix) (Amazon Redshift), Sybase IQ (SAP IQ), Exasol, Infobright, InfiniDB, MonetDB (VectorWise) (Actian Vector), LucidDB, SAP HANA, Google Dremel, Google PowerDrill, Metamarkets Druid, kdb +, etc.

In traditionally line DBMS lately solutions for data storage on columns began to appear too. Examples — column store index in MS SQL Server, MemSQL, cstore_fdw for Postgres, the ORC-File and Parquet formats for Hadoop.

OLAPServer represents the simplest and extremely limited implementation of the column database. So OLAPServer supports only one table set in compile time — the table of visits. Data-refresh becomes not in real time as everywhere in the Metrics, and several times in days. As data types only numbers of the fixed length of 1-8 bytes are supported. And as request only the option is supported SELECT keys..., aggregates... FROM table WHERE condition1 AND condition2 AND... GROUP BY keys ORDER BY column_nums....

ClickHouse

Using OLAPServer, we managed to understand, how well column DBMS cope with a problem of ad-hoc of analytics of non-aggregated data. If any report can be received according to non-aggregated data, then there is a question whether it is necessary to predagregirovat in general data in advance how we do it, using Metrage? On the one hand, preaggregation of data allows to reduce data volume, used directly at the time of loading of the page with the report. On the other hand, aggregated data are very limited solution. The reasons are following:- you have to foreknow the list of the reports necessary for the user;

- that is, the user cannot construct any report;

- at aggregation on a large number of keys data volume does not decrease and aggregation is useless;

- at a large number of reports too many options of aggregation (combinatorial explosion) turn out;

- at aggregation on keys of high cardinality (for example, URL) data volume decreases not strongly (less than twice);

- because of it data volume at aggregation can not decrease, and grow up;

users will watch not all reports which we for them will count. — that is, the most part of calculations is useless; - it is difficult to maintain logical integrity at storage of a large number of different aggregations.

Recently as an alternative to commercial column DBMS solutions for effective ad-hoc of analytics according to the data which are in systems of distributed computing began to appear: Cloudera Impala, Spark SQL, Presto, Apache Drill. Though such systems can effectively work at requests for internal analytical tasks, it is rather difficult to provide them as a backend for the web interface of the analytical system available to external users.

In Yandex the column DBMS — ClickHouse is developed. Let's consider the main requirements which we to it had before starting development.Ability to work with big data. In new Yandex. To a metrics of ClickHouse it is used for storage of all data for reports. Database amount for December, 2015 made 11,4 trillion lines (and it only for a big Metrics). Lines — non-aggregated data which are used for receipt of reports in real time. Each line contains more than 200 columns in the largest tables.

The system has to be scaled linearly. ClickHouse allows to increase cluster size by adding of new servers as required. For example, main cluster of Yandex. Metrics it was increased from 60 to 394 servers within two years. For fault tolerance, servers are located in different data-centers. ClickHouse can use all opportunities of iron for processing of one request. Speed more than 1 terabyte per second is so reached (data after a release, the columns only used).

High performance of work. High performance of base is our separate subject of pride. By results of internal tests, ClickHouse processes requests quicker, than any other system which we could get. For example, ClickHouse is on average 2,8-3,4 times faster, than Vertica. One silver bullet at the expense of which the system works so quickly is not in ClickHouse.

Functionality has to be sufficient for web analytics tools. The base supports SQL language dialect, subqueries and JOIN-y (local and distributed). There are numerous SQL expansions: functions for web analytics, arrays and the enclosed data structures, functions of the higher order, aggregate functions for approximate calculations by means of sketching, etc. During the work with ClickHouse you receive convenience of relational DBMS.

ClickHouse is developed in command of Yandex. Metrics. At the same time the system managed to be made rather flexible and expanded in order that it could be used successfully for different tasks. Though the base is capable to work at clusters of the big size, it can be installed on one server or even on the virtual computer. Now there are more than ten applications of ClickHouse in the company. ClickHouse well is suitable for creation of various analytical tools. Really, if the system successfully copes with tasks big Yandex. Metrics, it is possible to be sure what with other problems of ClickHouse will cope with a repeated stock on performance. In this sense Appmetrica was especially lucky — when it was in development, ClickHouse was already ready. For data handling of analytics of applications we just made one program which takes the entering data and after small processing writes them in ClickHouse. Any functionality available in the Appmetrica interface, represents just Select query. ClickHouse is used for storage and the analysis of logs of different services in Yandex. The typical solution would be to use Logstash and ElasticSearch, but it does not work at more or less decent data stream.ClickHouse is suitable as the database for time series — so, in Yandex it is used as a backend for Graphite instead of Ceres/Whisper. It allows to work more than with one trillion metrics on one server.

ClickHouse use analysts for internal tasks. By experience of use in the company, overall performance of ClickHouse in comparison with traditional methods of data handling (scripts on MR) is about three orders higher. It cannot be considered as simply quantitative difference. The matter is that having such high speed of calculation, it is possible to afford essentially other methods of a solution of tasks. If the analyst received a task to make the report and if it is the good analyst, then it will not do one report. Instead he at first will receive ten other reports better to study the nature of data and to check the hypotheses arising at the same time. Often it makes sense to look at data under different corners, even without having at the same time any accurate purpose — to find new hypotheses and to check them. It is possible only if the speed of data analysis allows to conduct researches in an interactive mode. The quicker requests are executed, the more hypotheses can be checked. During the work with ClickHouse there is such feeling as though you increased thinking speed. In traditional systems data, figuratively speaking lie a dead load at the bottom of a bog. With them it is possible to make anything, but it will take a lot of time and it will be very inconvenient. And if your data lie in ClickHouse, then these are "live" data: you can study them in any cuts and "drill" to each separate line.Outputs

So it turned out that Yandex. The metrics is the second-large system of web analytics in the world. The amount of the data coming to the Metrics grew from 200 million events a day at the beginning of 2009 to a little more than 20 billion in 2015. To give to users rather rich opportunities, but at the same time not to cease to work under the increasing loading, we had to change approach to the organization of data storage constantly. Efficiency of use of iron is very important for us. By our experience, at large volume of data it is worth worrying not about as far as the system is well scaled, and about that, each unit of resources is how effectively used: each main core, disk and SSD, RAM, network. If your system already uses hundreds of servers, and you need to work ten times more effectively, then hardly you will be able easily to install thousands of servers, as if well the system was not scaled. Specialization under a specific class of tasks is important for achievement of maximum efficiency. There is no data structure which well copes with absolutely different scenarios of work. For example, it is obvious that key-value base will not be suitable for analytical requests. The heavy load on system, the big specialization will be required, and you should not be afraid to use essentially different data structures for different tasks.

We managed to make so that Yandex. The metrics is rather cheap on iron. It allows to provide free service even for the largest websites and mobile applications. On this field at Yandex. There is no metrics competitors. For an example if you have a popular mobile application, then you can use Yandex free of charge. A metrics for applications even if your application is more popular, than Yandex. Cards.

This article is a translation of the original post at habrahabr.ru/post/273305/ If you have any questions regarding the material covered in the article above, please, contact the original author of the post.

If you have any complaints about this article or you want this article to be deleted, please, drop an email here: sysmagazine.com@gmail.com.